Prerequisites

- RCAC cluster account (apply here)

- Computer with internent connection

- Programs: A web browser (for Open OnDemand and Globus), Terminal/Putty/PowerShell

Prerequisites

What you will learn

This guide covers the day-one essentials for biologists using RCAC clusters. It uses Gautschi as the example cluster throughout, but the same patterns apply to Negishi, Bell, and Gilbreth. Just swap the cluster name in URLs and paths.

By the end, you will be able to log in, load software, submit a job, check your storage, and move data to and from the cluster.

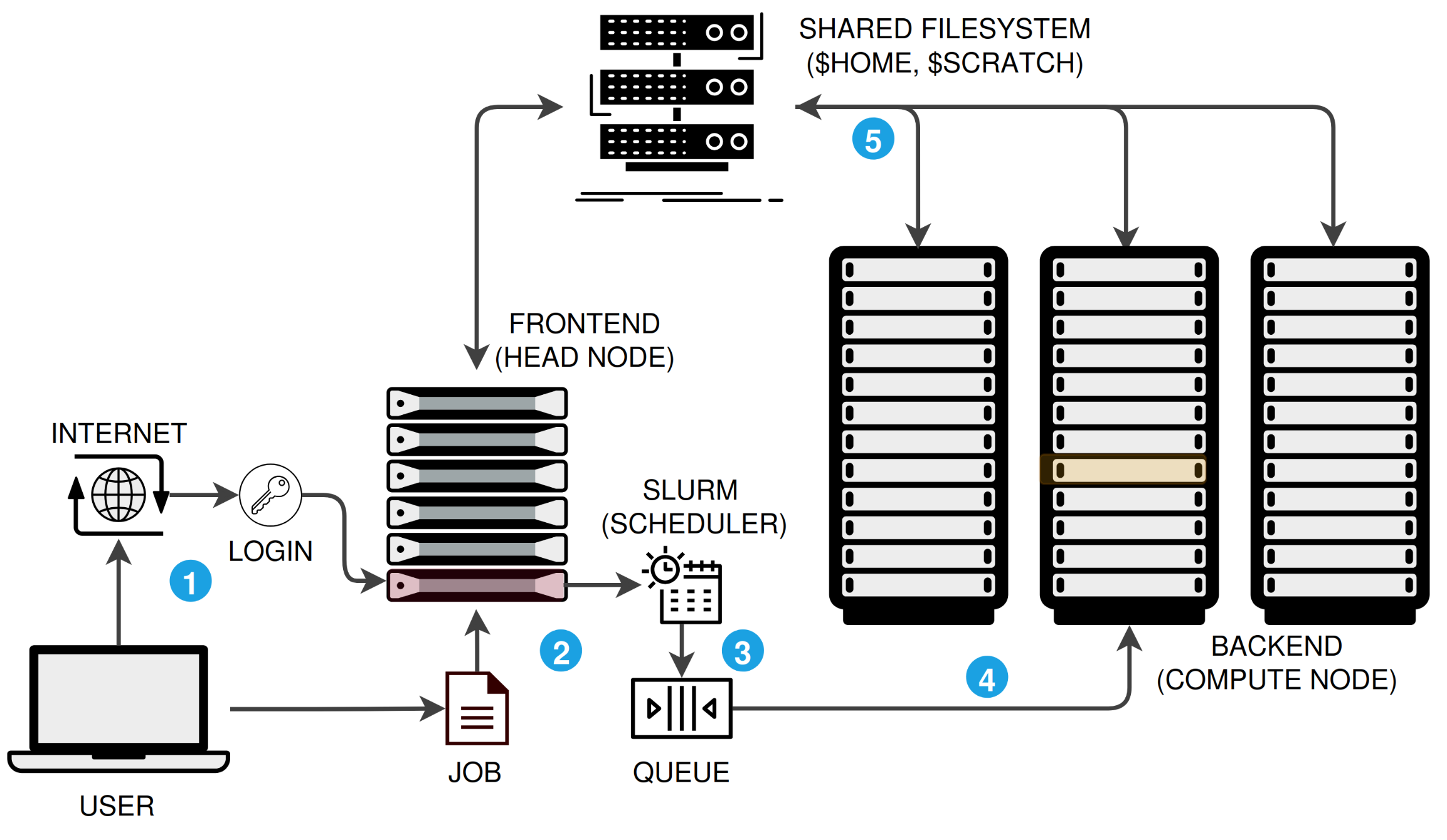

An HPC cluster is not a single computer. It is a collection of networked machines that work together, managed by a job scheduler. Here is the typical workflow:

$HOME, $RCAC_SCRATCH), so your data is accessible everywhere.There are three ways to connect. All three land you on the same login nodes with access to the same filesystems and software.

| Method | Best for | Requires installation? |

|---|---|---|

| Open OnDemand (OOD) | Browser-based access, file browsing, interactive apps | No |

| SSH | Command-line power users, scripting, automation | No (built into macOS/Linux); PuTTY or WSL on Windows |

| ThinLinc | Full Linux desktop, GUI tools (IGV, RStudio, CellProfiler) | Yes (ThinLinc client) |

Pick the method that fits your workflow and follow the instructions below:

Recommended starting point. Open OnDemand provides a web portal with a file browser, terminal, job submission forms, and interactive apps like JupyterLab and RStudio. No software to install.

Navigate to the OOD portal

Go to https://gateway.<cluster>.rcac.purdue.edu and log in with your Purdue (BoilerKey) credentials.

| Cluster | OOD URL |

|---|---|

| Gautschi | gateway.gautschi.rcac.purdue.edu |

| Negishi | gateway.negishi.rcac.purdue.edu |

| Bell | gateway.bell.rcac.purdue.edu |

| Gilbreth | gateway.gilbreth.rcac.purdue.edu |

Tour the dashboard

After login you will see the OOD dashboard with these key sections:

Open a terminal

Click Clusters in the top menu and select the cluster shell access (e.g., Gautschi Shell Access). A terminal opens in your browser. You are now on a login node.

SSH gives you direct terminal access. It is built into macOS and Linux. On Windows, use PowerShell, WSL, PuTTY, or MobaXterm.

ssh <boilerid>@gautschi.rcac.purdue.eduReplace <boilerid> with your Purdue career account username. For other clusters, replace gautschi with the cluster name.

ThinLinc provides a full Linux desktop environment, ideal for GUI-based tools like IGV, CellProfiler, MEGA, or any application that needs a graphical interface.

Install the ThinLinc client

Download and install the ThinLinc client for your operating system from the ThinLinc download page.

Connect to the cluster

Open the ThinLinc client and enter the connection details:

| Field | Value |

|---|---|

| Server | desktop.<cluster>.rcac.purdue.edu |

| Username | Your Purdue career account (BoilerID) |

| Password | Your Purdue password |

For example, to connect to Gautschi: desktop.gautschi.rcac.purdue.edu

Authenticate

After entering your credentials, you will be prompted for Duo two-factor authentication. Approve the push notification or enter the code.

Use the desktop

You now have a full Linux desktop. Open a terminal from the Applications menu to run commands, or launch GUI applications directly.

You can also access ThinLinc through a web browser (no client install needed) at https://desktop.<cluster>.rcac.purdue.edu/. The native client generally provides a smoother experience.

For detailed ThinLinc documentation, see the RCAC ThinLinc guide.

RCAC deploys bioinformatics tools as BioContainers, pre-built Apptainer containers accessed through the Lmod module system. You do not need to install most tools yourself.

Always start with module --force purge before loading any modules. This removes all previously loaded modules, including sticky system modules that can cause conflicts with containerized tools.

module --force purgemodule load biocontainersThe biocontainers module unlocks all bioinformatics software. You must load it before any bioinformatics tool module becomes visible.

# Search for a specific toolmodule spider samtools

# Get loading instructions for a specific versionmodule spider samtools/1.21

# List all available biocontainer modulesmodule --force purgemodule load biocontainersmodule availmodule spider searches all modules, including those not yet visible. It shows available versions and prerequisites.

module --force purgemodule load biocontainers samtools/1.21samtools --versionAfter loading, run the tool as usual. Behind the scenes, RCAC creates shell function wrappers that transparently route your command through the container. You do not need to interact with Apptainer/Singularity directly.

| Command | Purpose |

|---|---|

module --force purge | Clean your environment (always do this first) |

module load biocontainers | Enable access to bioinformatics tools |

module spider <tool> | Search for a tool and its available versions |

module spider <tool>/<version> | Show loading instructions for a specific version |

module avail | List all currently loadable modules |

module list | Show what is currently loaded |

module load biocontainers <tool>/<version> | Load a specific tool |

module show <tool>/<version> | See what environment variables a module sets |

When you load a biocontainer module, RCAC creates a shell function that wraps the tool command in an apptainer run call. For example, loading bwa creates a function so that typing bwa actually runs:

singularity run /apps/biocontainers/images/<container>.sif bwa "$@"For most use cases this is invisible. If you need more detail, see Understanding the wrapper in the Running Bioinformatics guide.

If a tool is not available via module spider after loading biocontainers, you can request it:

While waiting, you can install the tool yourself using Conda or by pulling a custom container. See How Do I Find and Run Software X? for the full decision tree.

SLURM (Simple Linux Utility for Resource Management) is the job scheduler on all RCAC clusters. It manages the queue so that all users get fair access to compute resources.

The mental model: you describe what resources your job needs (CPUs, memory, time), submit it to the queue, and SLURM runs it on a compute node when resources are available.

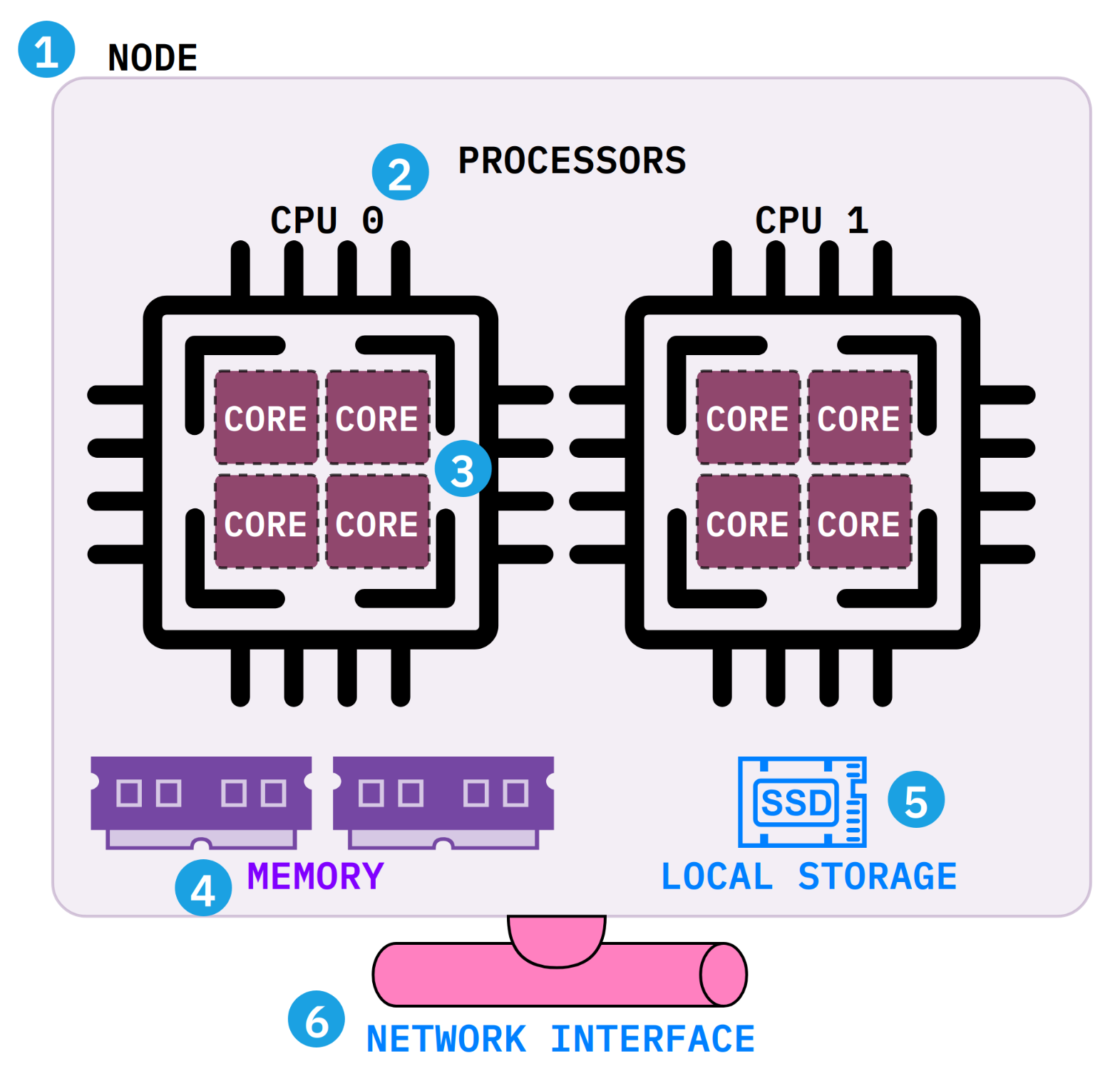

Before writing your first job script, it helps to understand what a compute node actually contains. Each SLURM directive maps to a physical component inside the node.

--nodes=1).--cpus-per-task).--mem (e.g., --mem=32G).$TMPDIR) for scratch files during a job. It is deleted when the job ends.$HOME, $RCAC_SCRATCH) where your data lives.Interactive jobs give you a shell on a compute node for testing, debugging, and exploratory work. Use them when you need to try commands before writing a batch script.

sinteractive -A <account-name> -n 4 -N 1 -t 1:00:00| Flag | Meaning |

|---|---|

-A <account-name> | Your allocation/account (check with slist) |

-n 4 | Number of CPU cores |

-N 1 | Number of nodes (almost always 1) |

-t 1:00:00 | Wall time (hours:minutes:seconds) |

Once the session starts, you are on a compute node and can load modules, run tools, and test your workflow. Type exit when done to release the resources.

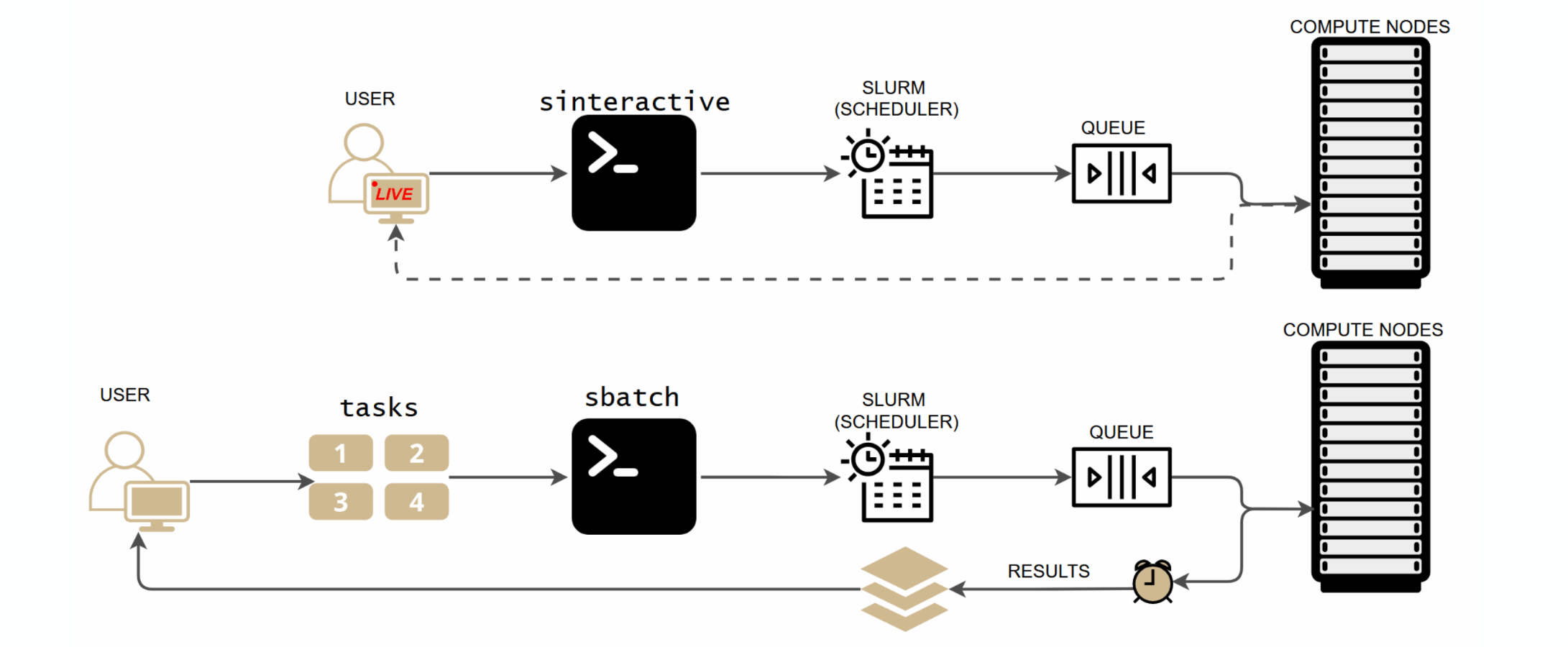

SLURM offers two ways to run work on compute nodes. Interactive (sinteractive) gives you a live terminal session for testing and exploration. Batch (sbatch) submits a script that runs unattended, and you collect results when it finishes. Use interactive mode to develop your workflow, then switch to batch for production runs.

For real workloads, write a batch script and submit it with sbatch. The job runs unattended on a compute node; you collect results when it finishes.

A SLURM batch script has three parts:

#!/bin/bash#SBATCH directives: resource requests (parsed by SLURM, not executed by bash)#!/bin/bash#SBATCH --job-name=my_analysis#SBATCH --account=<account-name>#SBATCH --partition=<partition-name>#SBATCH --nodes=1#SBATCH --ntasks=1#SBATCH --cpus-per-task=8#SBATCH --time=04:00:00#SBATCH --mem=32G#SBATCH --output=%x_%j.out#SBATCH --error=%x_%j.err

module --force purgemodule load biocontainers fastqc/0.12.1

fastqc --outdir results/ --threads ${SLURM_CPUS_ON_NODE} *.fastq.gzSome tools (e.g., Helixer, AlphaFold, deep learning frameworks) require GPU resources. Request GPUs with the --gpus-per-node directive and use the appropriate GPU partition.

#!/bin/bash#SBATCH --job-name=gpu_analysis#SBATCH --account=<account-name>#SBATCH --partition=gpu#SBATCH --nodes=1#SBATCH --ntasks=1#SBATCH --cpus-per-task=8#SBATCH --gpus-per-node=1#SBATCH --time=04:00:00#SBATCH --mem=32G#SBATCH --output=%x_%j.out#SBATCH --error=%x_%j.err

module --force purgemodule load biocontainers helixer/0.3.4

Helixer.py --fasta-path genome.fa --lineage land_plant --gff-output-path helixer.gff3Key differences from CPU jobs:

--partition=gpu (check sfeatures for available GPU partitions on your cluster)--gpus-per-node=1 (or more, depending on your tool)--constraint or --gres=gpu:<type>:1 if neededSubmit and monitor:

# Submit the jobsbatch slurm_cpu.sh

# Check your jobssqueue -u ${USER}

# Cancel a jobscancel <jobid>

# Check resource usage after completionsacct -j <jobid> --format=JobID,JobName,MaxRSS,Elapsed,State,ExitCode

# Detailed job report (walltime, memory, CPU efficiency)jobinfo <jobid>#SBATCH directive | Description | Typical value |

|---|---|---|

--account | Allocation to charge | Check with slist |

--partition | Queue/partition | Cluster-specific (e.g., cpu, gpu) |

--nodes | Number of nodes | 1 (almost always for bioinformatics) |

--ntasks | Number of processes | 1 for single tools |

--cpus-per-task | Threads per process | Match tool’s thread flag (4 to 32) |

--time | Wall clock limit | Start generous, tighten after sacct |

--mem | Total memory | Check tool docs; start with 16 to 32G |

--gpus-per-node | GPUs per node (GPU jobs only) | 1 for most tools |

--output | stdout log file | %x_%j.out (job name + job ID) |

--error | stderr log file | %x_%j.err |

| Command | Purpose |

|---|---|

slist | Show your accounts, available partitions, and resource limits |

sfeatures | Show available hardware features (CPU types, GPUs, memory per node) |

squeue -u ${USER} | Check status of your jobs |

scontrol show job <jobid> | Detailed info about a specific job |

sacct -j <jobid> | Job history, resource usage, exit status |

jobinfo <jobid> | Friendly summary: walltime, memory, CPU efficiency, disk I/O |

scancel <jobid> | Cancel a running or queued job |

sinteractive | Launch an interactive session |

For the full SLURM guide including array jobs, Conda in SLURM, debugging failed jobs, and common pitfalls, see Submitting SLURM Jobs in the Running Bioinformatics guide.

RCAC provides multiple storage tiers. Understanding when to use each one prevents quota issues and data loss.

| Storage | Path | Capacity | Persistence | Best for |

|---|---|---|---|---|

| Home | $HOME | ~25 GB | Permanent, nightly snapshots | Scripts, configs, small critical files |

| Scratch | $RCAC_SCRATCH | Very large (100+ TB shared) | Purged after 60 days of inactivity | Active analysis, intermediate files, job outputs |

| Depot | /depot/<group>/ | PI-purchased (1 TB increments) | Permanent, backed up, no purge | Shared lab data, raw sequences, final results |

| Node-local | $TMPDIR | Varies by node | Deleted when job ends | Fast temporary files within a single job |

$TMPDIR on the compute node. Fastest I/O, automatically cleaned.These commands help you check storage consumption and available compute resources. Run them on a login node.

| Command | What it shows | When to use |

|---|---|---|

myquota | Disk usage and limits for Home, Scratch, and Depot | Before starting a large analysis; regularly to avoid quota surprises |

userinfo ${USER} | Your accounts, quotas, group memberships, and active sessions in one view | Quick overview of your entire cluster profile |

slist | Your accounts, available partitions, and resource limits | To find your --account name for #SBATCH directives |

sfeatures | Node hardware: CPU types, core counts, memory, GPUs | To right-size --cpus-per-task, --mem, and --gres requests |

showpartitions | Partition time limits, node counts, and access policies | To choose the right --partition for your job |

purgelist | Files on Scratch scheduled for purge | To check if any of your files are about to be deleted |

For a detailed guide on project directory structure, naming conventions, and archiving, see Project Organization for Bioinformatics on HPC.

Moving data to and from the cluster is one of the first things you will need to do. Use the right tool for the job size.

| Method | Best for | Key advantage |

|---|---|---|

| Globus | Large datasets (GBs to TBs) | Auto-resume, integrity verification, fire-and-forget |

| rsync | Directory sync, incremental backups | Only transfers changed files |

| scp | Quick single-file transfers | Simple, no setup needed |

| OOD File Browser | Small files, drag-and-drop | No command line needed |

Globus is a managed file transfer service designed for research data. It handles large transfers reliably with automatic retry, checksum verification, and the ability to close your laptop while the transfer runs.

Access the Globus transfer portal

Go to transfer.rcac.purdue.edu and sign in with your Purdue (BoilerKey) credentials.

Find the cluster endpoint

In the Collection search field, type the cluster name (e.g., “Gautschi”). The RCAC endpoint will appear.

Select it and enter the path you want to access. For example:

/scratch/gautschi/<username>//depot/<group>/Set up the other side

In the second panel, search for your source or destination:

Start the transfer

Select files or directories on each side and click Start. Globus handles the rest. You will receive an email notification when the transfer completes.

rsync is a command-line tool that efficiently synchronizes files and directories. It only transfers files that have changed, making it ideal for keeping directories in sync and resuming interrupted transfers.

# From your local computerrsync -avzP /local/path/to/data/ <boilerid>@gautschi.rcac.purdue.edu:/scratch/gautschi/<username>/project/data/# From your local computerrsync -avzP <boilerid>@gautschi.rcac.purdue.edu:/scratch/gautschi/<username>/project/results/ /local/path/to/results/From a login node on one cluster, rsync to another:

rsync -avzP /scratch/gautschi/${USER}/project/ ${USER}@negishi.rcac.purdue.edu:/scratch/negishi/${USER}/project/| Flag | Meaning |

|---|---|

-a | Archive mode (preserves permissions, timestamps, symlinks) |

-v | Verbose output |

-z | Compress data during transfer |

-P | Show progress and enable resume of partial transfers |

For copying a single file or a small number of files:

scp myfile.txt <boilerid>@gautschi.rcac.purdue.edu:/scratch/gautschi/<username>/scp <boilerid>@gautschi.rcac.purdue.edu:/scratch/gautschi/<username>/results.csv ./scp -r mydir/ <boilerid>@gautschi.rcac.purdue.edu:/scratch/gautschi/<username>/For small files, the OOD file browser provides drag-and-drop upload and download directly in the browser. Navigate to Files in the OOD dashboard, browse to your target directory, and use the Upload/Download buttons.

For specialized use cases:

gautschi with your cluster)Now that you can connect, run software, submit jobs, and transfer data, explore these topics to level up:

RCAC HPC Exchange

Knowledgebase, tips, and training for common HPC tasks on RCAC clusters. Browse the exchange →

Running Bioinformatics on RCAC

Deep dive into biocontainers, Conda environments, custom containers, array jobs, and debugging failed jobs. Read the guide →

Productivity Toolkit

SSH keys, SSH config shortcuts, and shell customization for faster daily workflows. Read the guide →